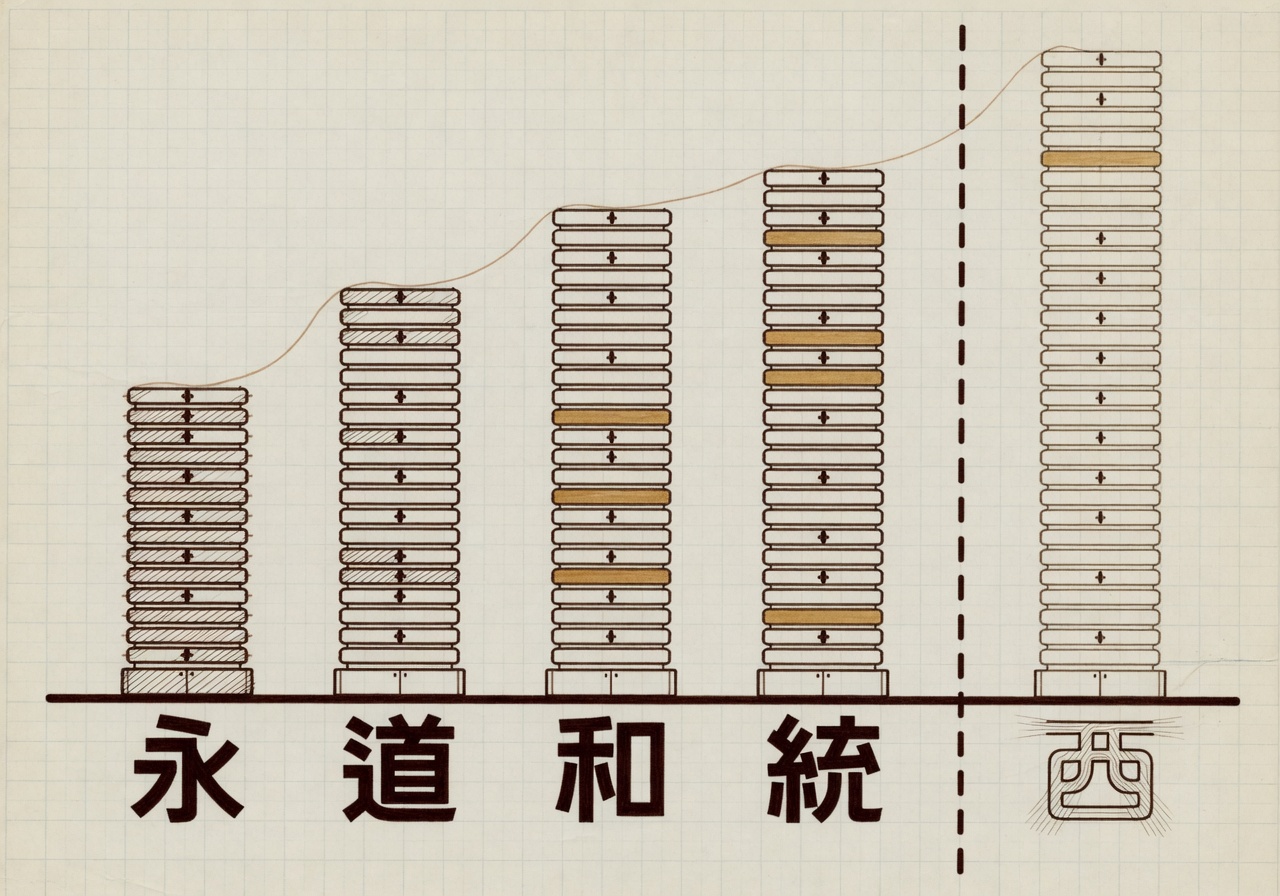

A working software engineer looking at the open-weights leaderboards in May would see four Chinese models within a few benchmark points of each other on agentic coding tasks, each released within two weeks of the next. Z.AI shipped GLM-5.1 on April 7. Moonshot followed with Kimi K2.6 on April 20. DeepSeek’s V4 Pro arrived on April 24. MiniMax’s M2.7 landed in the same window. The cluster is tighter than any month of releases the open tier has produced before. What makes it interesting is not that the models exist. It is that they have converged on a capability band the frontier closed labs still lead on, by a margin that is now small enough to argue about and large enough to matter.

Start with the numbers, because the numbers are where this story usually goes wrong. On SWE-bench Verified, the older and widely contaminated benchmark, the top open-weights score this month is 80.6 percent, posted by DeepSeek V4 Pro Max. On SWE-bench Pro, the harder replacement harness from Scale AI, the same model ties closed frontier scores from the previous generation but trails GLM-5.1 and Kimi K2.6 on the held-out commercial subset. GLM-5.1 lands at 58.4 percent on Pro. Kimi K2.6 lands at 58.6 percent. The two-tenths of a point separating those two is statistical noise; the labs that publish the numbers say so on their own model cards.
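To see why a gap that small reads as noise, here is a minimal sketch that compares the difference between the two quoted Pro scores with the sampling error of a pass rate. The task count `n_tasks` is a placeholder assumption, not the benchmark's actual size, and the binomial approximation treats the two runs as independent, which is a simplification because both models are scored on the same tasks.

```python
import math


def diff_standard_error(p1: float, p2: float, n: int) -> float:
    """Standard error of the difference between two pass rates, each
    measured on n tasks, under an independent binomial approximation."""
    return math.sqrt(p1 * (1 - p1) / n + p2 * (1 - p2) / n)


glm, kimi = 0.584, 0.586  # SWE-bench Pro scores quoted in the article
n_tasks = 500             # ASSUMPTION: placeholder task count, not the real benchmark size

se = diff_standard_error(glm, kimi, n_tasks)
gap = abs(kimi - glm)

print(f"gap = {gap * 100:.1f} points, standard error = {se * 100:.1f} points")
print("within noise" if gap < 2 * se else "distinguishable")
```

Under these assumptions the gap is a small fraction of one standard error. A larger task count shrinks the error bar, so the conclusion should be rechecked against the real evaluation size.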

The closed frontier still leads. GPT-5.3 Codex sits near 85 percent on SWE-bench Verified and roughly 90 on the harder Pro evaluation, depending on the scaffold. That is a real gap. It is not, however, the gap the trade press was reporting a year ago, when the same comparison showed open models at half the closed score, not three-quarters. Epoch AI, which maintains the most carefully curated public capability index, now estimates the open tier trails the frontier by about three months on average, with the gap closing on coding faster than on reasoning.

The inference economics are doing the work

The argument that matters for anyone shipping software is not whether the open models are as good as GPT-5.3 Codex. It is whether they are good enough at a price that changes what a company can afford to build. The published inference rates are blunt: DeepSeek V4 Pro lists at about $2.20 per million output tokens. Kimi K2.6 is cheaper, at $0.95 per million. The same closed frontier the open models trail charges roughly thirty to forty times more for output tokens on agentic coding workloads, depending on the tier. That ratio is the actual news. A capability gap of three months at a cost ratio of thirty-to-one is not a curiosity. It is a procurement decision.
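The procurement arithmetic can be sketched in a few lines. The two open-model prices and the thirty-to-forty multiple come from the figures above. The monthly token volume is a hypothetical workload chosen only for illustration, and the closed-model bill is implied from the quoted multiple, not taken from any published price list.

```python
def monthly_output_cost(tokens_per_month: int, usd_per_million: float) -> float:
    """Cost in USD of generating tokens_per_month output tokens at a listed rate."""
    return tokens_per_month / 1_000_000 * usd_per_million


# Listed output prices quoted in the article, in USD per million output tokens.
OPEN_PRICES = {"DeepSeek V4 Pro": 2.20, "Kimi K2.6": 0.95}

# ASSUMPTION: hypothetical agentic-coding workload of 500M output tokens a month.
TOKENS_PER_MONTH = 500_000_000

# The article's "thirty to forty times" multiple, applied to each open price in turn.
RATIO_LOW, RATIO_HIGH = 30, 40

for name, price in OPEN_PRICES.items():
    open_cost = monthly_output_cost(TOKENS_PER_MONTH, price)
    print(
        f"{name}: ${open_cost:,.0f}/month; implied closed-model bill "
        f"${open_cost * RATIO_LOW:,.0f} to ${open_cost * RATIO_HIGH:,.0f}"
    )
```

Input-token pricing, caching discounts, and self-hosting costs are left out here, and any of them could move the ratio for a real workload.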

The architectural reason is mixture-of-experts. DeepSeek’s V4 family activates a small fraction of its total parameters on any given forward pass; the model is large in total weight but small in active compute per token. Moonshot, Z.AI, and MiniMax have all adopted variants of the same approach, with different routing strategies and different expert sizes. The technique is not novel. DeepSeek published the architecture in 2024 in the DeepSeekMoE paper, and Google and Mistral were running similar designs before that. What is new is that four labs have now shown they can train and serve MoE models at the scale required to compete on the harder evaluations, and the inference savings flow through to the public price list.
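The idea of activating only a fraction of the parameters per token can be illustrated with a generic top-k router. This is a toy sketch in pure Python, not a reproduction of any lab's routing strategy: the sizes, the scaling "experts", and the helper names (`top_k_route`, `moe_forward`, `make_expert`) are all hypothetical.

```python
import math
import random


def top_k_route(gate_logits, k):
    """Pick the k highest-scoring experts and renormalize their weights
    with a softmax over just those k logits."""
    top = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)[:k]
    peak = max(gate_logits[i] for i in top)
    exps = [math.exp(gate_logits[i] - peak) for i in top]  # subtract peak for stability
    total = sum(exps)
    return [(i, e / total) for i, e in zip(top, exps)]


def moe_forward(x, gate_weights, experts, k):
    """Route one token vector x through only k of the available experts."""
    # One gate logit per expert: dot product of its gate vector with x.
    logits = [sum(w_j * x_j for w_j, x_j in zip(w, x)) for w in gate_weights]
    routed = top_k_route(logits, k)
    out = [0.0] * len(x)
    for idx, weight in routed:
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + weight * y_j for o, y_j in zip(out, y)]
    return out, [idx for idx, _ in routed]


def make_expert(scale):
    """Hypothetical stand-in for an expert network: scales its input."""
    return lambda x: [scale * v for v in x]


random.seed(0)
DIM, NUM_EXPERTS, K = 4, 8, 2  # ASSUMPTION: toy sizes for illustration only
experts = [make_expert(1.0 + i) for i in range(NUM_EXPERTS)]
gate_weights = [[random.uniform(-1, 1) for _ in range(DIM)] for _ in range(NUM_EXPERTS)]

x = [0.5, -1.0, 0.25, 2.0]
output, active = moe_forward(x, gate_weights, experts, K)
print(f"active experts: {active} ({K} of {NUM_EXPERTS} evaluated for this token)")
```

The compute per token scales with `K`, while the total parameter count scales with `NUM_EXPERTS`. That separation is what lets a model be large in total weight but cheap to serve.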

Open-weight models lag behind the most capable models by an average of three months. The gap varies considerably over time, sometimes even closing completely. — Epoch AI, Capabilities Index analysis of open-weight vs. closed-weight models

What the cluster does not tell you

It would be easy to read the convergence as evidence that the open tier has caught up. Three things complicate that reading. The first is benchmark sensitivity. SWE-bench Pro is harder than Verified, but it is still a software-engineering benchmark; the field has very little public evaluation data on the kinds of reasoning tasks closed labs increasingly emphasize, and even less on long-horizon agentic work where the scaffold matters as much as the model. The 58-point cluster on Pro tells you something real about coding. It does not tell you whether the same models can run a multi-step agent across a week of work without losing the thread.

The second is that none of these labs have published the full training-data composition for the models in question. The community has reverse-engineered enough to be confident the models trained heavily on GitHub and on synthetic coding traces, but the licensing status of substantial portions of that data is unresolved. The closed labs are in the same position on this question; the difference is that open weights are auditable, and an enterprising researcher with a few weeks and a hard drive can probe the models for memorized training material. That work is now beginning to appear, and it is worth watching.

The third is the policy overlay. The U.S. Department of Commerce’s January rule shifted advanced-chip exports to China to case-by-case review, and the AI OVERWATCH Act, which advanced out of the House Foreign Affairs Committee on January 21, would prohibit sales of NVIDIA’s Blackwell generation to China for two years. The current crop of Chinese open-weights models was trained on hardware acquired before those rules tightened. Whether the cluster repeats itself in 2027 depends on whether the labs can train successor models on whatever combination of domestic accelerators, prior-generation NVIDIA inventory, and overseas compute they can assemble. The export-control thesis has always been that compute scarcity slows training. The open-weights cluster is evidence that the thesis is, at minimum, contested.

Why this matters to readers who are not buying inference

For a developer choosing a model to build with, the practical implication of the cluster is that the open tier is now a defensible default for coding workloads where the gap to GPT-5.3 Codex is acceptable and the cost ratio is decisive. For a CIO running a procurement cycle, the implication is the same, shaded by support and liability terms the open labs do not offer in the form a Fortune 500 legal department wants. For a policy reader, the implication is harder. The story the export controls were written to prevent is not exactly happening, and not exactly not happening; the Chinese open-weights labs are producing competitive models, but they are producing them at a generation that the U.S. closed frontier still leads. Whether that gap holds, narrows, or inverts is what 2027 will answer.

The honest framing, in the meantime, is that the open tier has converged on a band the closed frontier still leads on. The convergence is real. The lead is real. Either by itself is misleading. The pair is the story.