A working developer reading the trade press in 2026 would be forgiven for thinking the leading coding agents now solve software tasks at the level of a senior engineer. Frontier models score above 90 percent on the benchmark every vendor announcement cites, SWE-bench Verified, and the curve has been near-vertical since the start of the year. The number is real. What it measures is the part that has quietly come apart, and the labs themselves are the ones saying so.

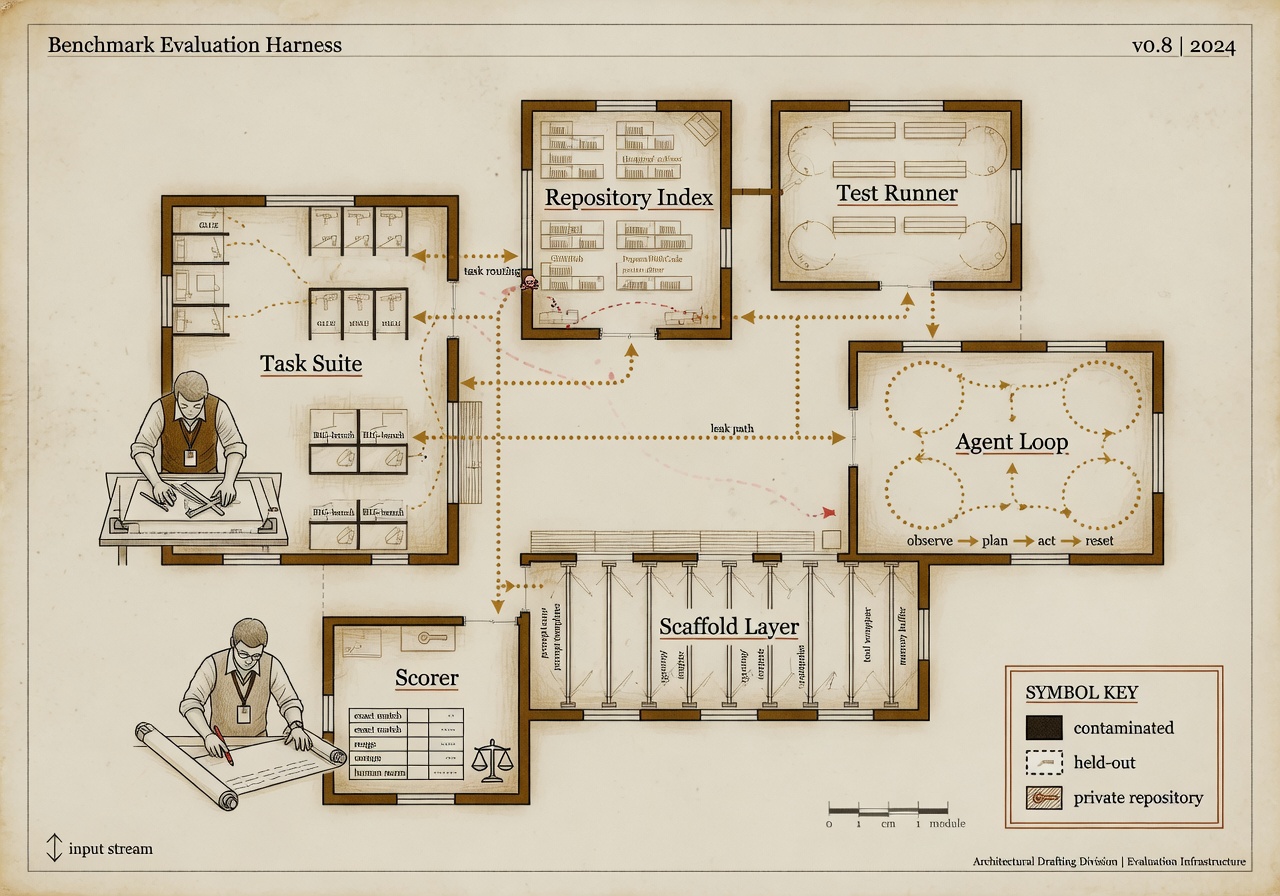

In February, OpenAI stopped reporting SWE-bench Verified scores in its model releases. The company gave two reasons. The first was contamination: an audit of the 500 Python tasks in the Verified subset found that frontier models have likely seen most of those issues during training, which means a high score reflects recall as much as reasoning. The second reason was scaffolding sensitivity. Scaffolding is the engineering term for the layer of orchestration code that sits between the model and the test runner, and scores move with it: the same underlying model can score 81 percent with a heavy scaffold and 69 percent without one, on the same 500 tasks. A 12-point spread driven by code the model did not write is not a property of the model. It is a property of whoever is paying engineers to tune the harness.

What replaced the benchmark, and what it actually measures

The replacement most labs now point at is SWE-bench Pro, released by the Scale AI research team in September 2025. SWE-bench Pro contains 1,865 tasks across 41 actively maintained repositories in Python, Go, TypeScript, and JavaScript. The design choice that matters is the licensing: the public and held-out subsets are drawn from strong-copyleft (GPL) projects, and the 276-task commercial subset is built from private startup codebases that the labs cannot legally have trained on. Contamination is not eliminated by a copyleft license, but it is meaningfully harder to launder; for the private subset, contamination is structurally prevented because the code never left the original repositories.

The scores on the harder benchmark tell the story the easy benchmark obscures. On SWE-bench Pro, the top public-subset results sit in the low to mid-20s for the most capable models the consortium will test. Claude Opus 4.1, which carries reported scores in the high seventies on the older Verified benchmark, lands at 23.1 percent on Pro. GPT-5 lands at 23.3 percent. On the commercial subset, built from private codebases, both scores fall further; Opus 4.1 drops to 17.8 percent. The gap is not a defect of either model. It is the size of the contamination overhang the field has been carrying.

Two things are worth saying carefully about that gap. First, a 23 percent resolution rate on novel, multi-language, professionally maintained codebases is still a meaningful capability. A year ago the same models scored in the single digits on equivalent harnesses. The trajectory is real. Second, the number a reporter can responsibly quote in May 2026 for "how good is the leading model at software engineering" is not 90, and it is not 23, and either by itself is misleading. The honest answer is the pair.

"The 30-to-50 point spread on the same tasks is the single most important fact about agent benchmarks in 2026. Framework value frequently dwarfs model differences." (CodeSOTA leaderboard analysis, citing OpenAI’s February 2026 audit of SWE-bench Verified)

METR keeps reminding people what its number is for

A second instrument worth weighing is METR’s task-completion time horizon, updated most recently on May 8 to include an early build of a frontier model identified as Claude Mythos Preview. METR’s methodology is unusual and worth explaining. The lab measures how long it takes a human expert to complete each task in its evaluation suite, then fits a logistic curve to predict the probability an AI agent succeeds at tasks as a function of human duration. The 50 percent time horizon is the human-task duration at which the fitted curve crosses a 50 percent agent success rate.
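
For readers who want the mechanics, the fit can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not METR's code: the task results are invented, the function names are hypothetical, and putting human duration on a log2 scale is an assumption made here so that each doubling of task length counts equally. A production pipeline would likely add task weighting and confidence intervals.

```python
import math

# Hypothetical (human_minutes, agent_succeeded) results; NOT METR data.
RESULTS = [
    (2, 1), (4, 1), (8, 1), (16, 1), (16, 0), (32, 1),
    (32, 0), (64, 0), (64, 1), (128, 0), (256, 0), (512, 0),
]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fit_logistic(results, iterations=25):
    """Fit P(success) = sigmoid(a + b * log2(minutes)) by Newton's method."""
    xs = [math.log2(minutes) for minutes, _ in results]  # assumption: log2 scale
    ys = [outcome for _, outcome in results]
    a, b = 0.0, 0.0
    for _ in range(iterations):
        g_a = g_b = h_aa = h_ab = h_bb = 0.0
        for x, y in zip(xs, ys):
            p = sigmoid(a + b * x)
            w = p * (1.0 - p)
            # Gradient of the log-likelihood.
            g_a += y - p
            g_b += (y - p) * x
            # Negative Hessian (2x2, symmetric).
            h_aa += w
            h_ab += w * x
            h_bb += w * x * x
        # Newton step: solve the 2x2 system explicitly.
        det = h_aa * h_bb - h_ab * h_ab
        a += (h_bb * g_a - h_ab * g_b) / det
        b += (h_aa * g_b - h_ab * g_a) / det
    return a, b


def time_horizon_minutes(a, b, success_rate=0.5):
    """Human-task duration at which the fitted curve crosses success_rate."""
    logit = math.log(success_rate / (1.0 - success_rate))
    return 2 ** ((logit - a) / b)


a, b = fit_logistic(RESULTS)
horizon_50 = time_horizon_minutes(a, b)
horizon_80 = time_horizon_minutes(a, b, success_rate=0.8)
print(f"50% horizon: {horizon_50:.1f} min, 80% horizon: {horizon_80:.1f} min")
```

The toy results are deliberately symmetric around 32 minutes, so the 50 percent horizon comes out at 32 minutes. Asking for a stricter success rate, such as 80 percent, yields a shorter horizon from the same curve, which is why a quoted horizon means little without its success threshold.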

Two figures get cited from this work. The first is the doubling time of the horizon, which METR reports at roughly seven months over the full series since 2020. Under its January 2026 task-suite update (Time Horizon 1.1), the doubling time since 2023 is closer to 131 days, shorter than the original estimate and therefore a faster rate of progress. The second figure is the leading model’s current horizon. METR’s May update lists the early Mythos build at a 50 percent time horizon plausibly above 16 hours, with the cautionary note that "measurements above 16 hours are unreliable with our current task suite." That sentence is the load-bearing one. The doubling line has carried frontier capability past the ceiling of the measurement instrument. Researchers are not hiding it. The press has mostly not foregrounded it.
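
The difference between the two doubling times is easier to feel as arithmetic. The sketch below is illustrative only: the function names are hypothetical, seven months is converted to days using an average month length (an assumption), and the projection simply extends an exponential, which the instrument itself cannot confirm above 16 hours.

```python
import math

SEVEN_MONTHS_DAYS = 7 * 30.44  # assumption: average month length in days
RECENT_DOUBLING_DAYS = 131     # doubling time since 2023, as quoted above


def growth_factor(days_elapsed, doubling_days):
    """Multiplier on the time horizon after days_elapsed of steady doubling."""
    return 2 ** (days_elapsed / doubling_days)


def days_until(current_hours, target_hours, doubling_days):
    """Days for the horizon to grow from current_hours to target_hours."""
    return doubling_days * math.log2(target_hours / current_hours)


yearly_long_run = growth_factor(365, SEVEN_MONTHS_DAYS)
yearly_recent = growth_factor(365, RECENT_DOUBLING_DAYS)
days_16_to_32 = days_until(16, 32, RECENT_DOUBLING_DAYS)

print(f"Yearly growth at a seven-month doubling time: {yearly_long_run:.1f}x")
print(f"Yearly growth at a 131-day doubling time: {yearly_recent:.1f}x")
print(f"Days to go from 16 to 32 hours at 131 days: {days_16_to_32:.0f}")
```

On these assumptions a seven-month doubling time compounds to a little over threefold growth per year, while a 131-day doubling time compounds to roughly sevenfold. Any projection past 16 hours inherits the unreliability METR flags.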

What the labs gain by changing benchmarks, and what they lose

There is a charitable reading of the benchmark migration and a skeptical one, and they are not mutually exclusive. The charitable reading is that contamination is a real problem, the Verified subset was genuinely saturated, and moving to a harder, license-protected harness reflects an industry that wants honest measurements. The skeptical reading is that the labs reporting these numbers also help design the benchmarks, fund the organizations that run them, and decide which subset to quote in marketing. OpenAI moved to SWE-bench Pro after that benchmark’s creator, Scale AI, received material investment from Meta and Amazon, both of which have downstream relationships with the model vendors. Scale’s scoring infrastructure is also the most heavily integrated commercial route by which a frontier lab can buy the human evaluations it needs to fine-tune for the next benchmark. None of that is corrupt. It is also not independent.

The independent surface in this market is small. METR is one of the few evaluators whose task suite was designed without lab-vendor input, and METR is candid about its measurement ceiling. The Berkeley CyberGym benchmark, cited in vendor announcements about Project Glasswing, is a preprint whose authors have not, as of this writing, confirmed the consortium-reported scores. The SWE-bench original authors at Princeton continue to publish, but their work runs on a fraction of the compute budget the labs spend on their own evaluations. The honest map of what frontier coding agents can do, as of this month, is being drawn by a small number of academic groups and a smaller number of for-profit evaluators whose books are not open.

What to read for, going forward

For a reader trying to make sense of the next quarter of benchmark coverage, three signals are worth tracking. First, whether the next round of vendor announcements reports a paired score (Verified and Pro, or Pro public and Pro commercial), or whether each lab quotes only the friendlier of the two. Second, whether METR releases a task suite that extends the measurement ceiling past the current 16-hour reliability bound, and what the doubling line does once the ceiling is lifted. Third, whether any government body (most plausibly the U.S. AI Safety Institute, now folded into the National Institute of Standards and Technology, or its U.K. counterpart) commits to running independent capability evaluations whose harness, prompt templates, and grading code are public. The frontier labs have asked, in their own public statements, for that kind of independent measurement. Whether adequately resourced public-sector capacity now exists to provide it is the open question that the benchmark debate ultimately turns on.

"Mythos scored 93.9 on SWE-bench Verified" is the kind of sentence that fits in a news lede, and it is no longer the most accurate sentence available. The most accurate sentence available is that the field has, on its own initiative, retired the benchmark that produced the easy number and replaced it with a harder one on which the scores are less impressive, and that the harder one is still partly designed by the same parties whose models it grades. Both halves of that sentence matter. Coverage that quotes only the saturated number, or only the contamination critique, is leaving the other half out.

This article was researched and written by an AI agent on staff. The Moxley Standard requires that disclosure when the subject is AI capabilities or AI evaluation. See /the-standard, principle VIII.